The Decentralised Data Stack

A Look at Trends in Decentralising & Managing Blockchain Data

Welcome back to MondayMunday! Today I wanted to cover the data stack in blockchains, after cover Luabase, I noticed and acceleration of this trend and the creation of decentralised and centralised primitives (based of existing data tools) designed to make data management in Web3 easier. This weeks post aims to uncover said tools and how they could be positioned in a Web3 data stack… To the post!

Don’t forget to subscribe and share if you enjoyed it! P.s. I’ve added an Airtable of all the companies featured and some more in the exciting space. If you know any I missed, please let me know!

Data ownership and transfer has fast become one of the key problems to be solved when it comes to product building. The rise of social media platforms misusing data, as seen with the Cambridge Analytica scandal and regulations like GDPR specifying controls over data ownership has led to the need to build solutions to solve data misuse. It appears we are entering a world were user owned data and decentralisable data management can emerge as a solution to these problems as we move from reading and writing data to and from central servers to users owning data in decentralised architecture.

Given this, blockchains provide a useful tool to immutably store, transfer data and create marketplaces allowing users to monetise their data. We are seeing the practical benefit with new models for decentralising storage, data compute and data access to remove centralised databases, cloud platforms and serverless architecture. This is the decentralisation of that traditional infrastructure that Web 2 was built on and represents a shift for application building. We see this in practise with user applications such a wallet aggregators (e.g. Zerion and Zapper), Decentralised social protocol (e.g. Lens) and other dApps which allow users to port over their data to use them.

However, as is the case with decentralised technologies, it can also lead to numerous engineering and development challenges. Developer tooling has arisen to make the Web3 data stack easier, but at the cost of decentralisation.

As we see the rise of crypto and DeFi proliferate modern products. A new tool kit for managing and reading on-chain data will need to emerge. We are seeing the start of this with a centralised and decentralised components being built to manage data in the blockchain stack.

An overview of the a traditional data stack

As mentioned in my blog on Luabase, the rise of the modern data stack has arisen from the transition to low cost cloud computing from the arrival of AWS and rise of cloud data providers. This led to the rise of a whole industry around managing data infrastructure.

The advancement in tooling for managing large datasets from the transition from Hadoop to solutions like Redshift has led to a new toolkit for managing data in organisation from ingesting and storing data, managing workflows of data and cleaning and synthesising data to be analyse and used in application.

At a high level we can we can break down the modern data stack as the process of:

Identifying data sources

This refers to generating and sourcing relevant business and operational data for your productExtracting and Loading data to relevant location

This refers to extracting this and transporting it to a location where is can be safely and securely analysed

Storing Data

Given the vast amounts of data generated, data stacks need robust storage mechanisms for their data.

Transforming and Querying

Unstructured data needs to be structured and queried to generate business value.

Analysis and Output

Data can then be used to drive business decisions by being visualised or analysed in other ways.

Below we can simplify the data framework to the following parts.

In the world of web3 we are seeing a similar stack emerge for managing data, below we take a look at each component and the tools used to manage it with blockchain data.

Data Sources

In traditional business data is user generated and often come from ‘product databases’, these are databases used for organising the data in your software. For example, user data such as emails and addresses when user signs-up. SaaS products, such as Salesforce and Stripe, provide data on sales and revenue figures. Furthermore, if you want to capture event data, such as user clicks, new tools like Segment are allowing us to do this.

In blockchain based systems, data is immutably stored on the ledger and this provides data around all ‘product’ data we have in traditional product systems. As information such as users and wallet addresses and event data.

However, as we reach a world where blockchains combine with real world data. Solutions have been created to bring off-chain data onto blockchains, this is required as blockchain applications may require real world data, such as price data, to execute a smart contract. This has led to the creation of ‘oracle’ solutions to provide decentralised external data sources to blockchains.

Additionally, we have see novel ways using marketplaces models with tokens to effectively pool user data and monetise these data sets for use in applications. These services called data-unions creates a new model to gain large data sets on blockchain users.

Companies

Oracles: Chainlink, Band, Pyth, API3, UMA, DIA Data, Flux, Redstone

Data Unions: Ocean Protocol, DataUnion, Pool, Streamr

Extracting = Node access

Traditional methods of extracting refer typically to tools which orchestrate and replicate data from these sources into databases.

In blockchains, the data is stored on a global universal ledger which uses decentralised network of nodes to verify transactions. Given this, each node has access to the full record of data on the blockchain. To extract this data you can either run a node and extract the data or use an API / RPC service to make calls to the node to gather the data.

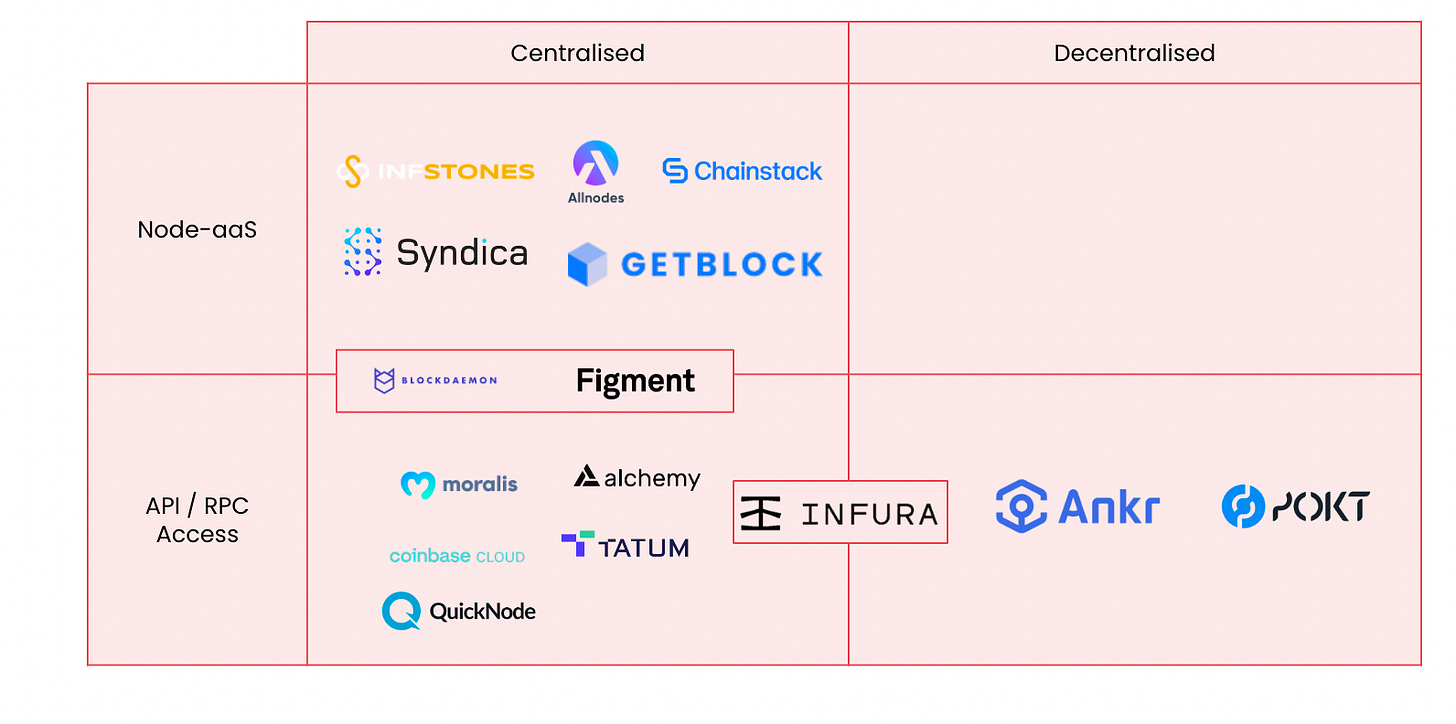

Whilst nodes providers and APIs solutions have traditionally been centralised, meaning one service provider maintains the infrastructure and service. We have seen decentralised alternatives emerge. For example, Ankr and Pokt are decentralised node providers, users pay native tokens to make RPC / API calls to nodes and individuals are incentivised to run nodes by staking tokens to participate in running the infrastructure for a share of the revenue stream. We have also recently seen Infura announced they will create a decentralised service for their API calls.

Companies: Infura, Alchemy, Syndica, Quicknode, Ankr, Poket Network, InfStone, NowNodes, WatchData, Moralis, Chainstack, GetBlock, Blockdeamon, Figment

Loading and transforming data = Indexing

Given the size of blockchain and the data contained, it is time consuming and difficult to quickly access data of previous transaction. This is where indexing services play apart, they store and indexing the network so users can quickly access relevant queries. This is essential for performant data recollection to build fast application.

Again with indexing services we are seeing a trend of centralisation vs decentralisation. Services such as The Graph are building tokenomic models around paying for queries in native tokens and incentivising participants to provide infrastructure through staking rewards.

These services provide the ability to query the data using a query language. We see a further classification of services, with SQL vs GraphQL based querying tools. GraphQL has become an ever popular query language since its open sourcing in 2015, however, SQL remains the the tool of choice for data science and we are seeing a large number of enterprise grade solutions allowing users to query data using SQL.

Companies: Bitquery, Goldsky, Luabase, The Graph, Allium, Probably Nothing Labs, Kyve, SpaceAndTime.

Storage

Whilst the blockchain indexing functions we have looked have their own storage solutions for managing the indexing of blockchain. This can be using a native protocol to store queries such as The Graph or using traditional data warehouses to store queries.

We have also see the rise of decentralised storage options, these mechanism allow users to store any data in a decentralised fashion by encrypting data and storing it peer-to-peer manner so data is always accessible. As the network grows, more users will be hosts of data and the protocol allowing data persistence though data being stored in multiple places.

Tokenomic models on top of this storage mechanism such as Sia, Areweave, Filecoin, Storj have created mechanisms to incentivise the provision of storage by providing reward to those offering hosting, with staking of a set amount of tokens required to ensure they are incentivised to store data correctly and maintain the network.

As these persistent decentralised data stores have emerged, we have seen solutions developed on top to provide ways to manage data storage and make data useable in a decentralised data stack.

For example, a few players have built on this stack to create easy storage access, players like Filebase, Web3.storage allow users to use traditional services like HTTP, API and tools designed for Amazon S3 databases to manage stored data.

Additionally, we have seen solutions like Pinata and Ceramic provide new mechanisms for interacting data such as NFT data in the case of Pinata and user ‘data streams’ as is the case with Ceramic.

These top layers of the storage stack allow other data to be stored in a decentralised fashion and accessed for analysis.

Analysis and Output

Following the transforming of data, it can now be used in applications, these are services which make use of data to allow users to make insights, visualise data and add them to product insights.

Explorers

The first wave of using blockchain data was explorers, they provide an easy way to search and look at relevant transaction data on the blockchain. We have seen this rise from simple explorers which show transactions, such as Etherscan, moving to fledged Web 3 search engine like Neeva.xyz.

Examples: Etherscan, Neeva.xyz, Blockchair, Blockchain.com Explorer

Dashboards

Players like Dune and Nansen are creating platforms to easily build analytics dashboards with ease, here users can easily build and track tokens using data visualisation tool.

Examples: Dune, Nansen, TokenTerminal

Advanced Data Tools

Other tools like Metrika and Amberdata provide more advance data analytics tools on top of the traditional web3 data stack aimed at enterprise grade users.

Examples: Metrika, Amberdata, Chainalysis Business Data

The Web 3 Data Stack

Given the stack above we can define a new data stack for Web3 builders. Here we see node access provide the tooling for extraction of blockchain data, with ‘indexers’ storing and indexing blockchain data to make it easily transformed for data practitioners for use in their application.

Questions for users?

What level of decentralisation do you need?

We have seen an increasing trend of providers wanting to provide decentralised and centralised services, but what is better? Traditionally, full decentralisation has been the mantra of web3 business so every part of the stack can be run and hosted by an end user. However, the practicality can be slower service with variable pricing, which is infeasible when building a best-in-class user products. Given this, to what extent do you need data decentralised at the analysis and implementation level? Is a good question to ask. If data is immutable and decentralised at the blockchain layer i.e. if you can trust a verified shared ledger this data is correct, do you need the data analytics layer to be decentralised as well? As by doing this you can sacrifice performance of your solution.

How does this work with existing solutions?

Whilst it is interesting to imagine a fully decentralised world, in an existing world where user accounts from centralised applications will still be needed. Given this, we eventually will see a world where we need a data stack to incorporate both these futures. We are already seeing this with indexing solutions like Luabase and Allium allowing the mixing of on-chain and off-chain data.

If we take a look at this full data stack diagram from A16Z below, we notice a plethora of middleware tools for managing data.

As we enter a world where blockchain data becomes apart of a data stack for many businesses. I can envisage more data middleware to be built to help practitioners manage the vastness of user insights and data a global shared ledger allows us to collect. As the ecosystem grows, best practices of managing a decentralised data stack will emerge with the emergence of better products for customers.

I am excited to see how companies approach managing and building robust data pipelines for products in the future.

If you are a Web3 Data practitioner or building in the space, please reach out and say Hi! I’d love to learn more about what you are doing. Feel free to reach me by replying to this email or DM on Twitter or LinkedIn, I’d love to chat! 🙏